Mastering AI: The 7 Essential Terms You Need to Know

Artificial intelligence (AI) is no longer a far-off idea. It’s all around us, from your smart toothbrush to the systems that run big companies. But AI is changing so fast that it’s tough to keep up, even for folks who work in tech. That’s why I’ve picked out seven key AI terms worth knowing as the field keeps growing. How many do you already know? Let’s find out.

AI is showing up in every industry. It’s automating simple jobs and helping us discover new things. Knowing the basics of how AI works is super important now. This guide breaks down seven main AI terms. We’ll explain them simply and show you how they’re used. If you like tech, work in business, or are just curious about what’s next, this will help you understand AI better.

Understanding the Core: Agentic AI and Reasoning Models

Many of today’s most capable AI systems are designed to act on their own and think things through. This section covers agentic AI and the large reasoning models that power it. It shows how AI is moving past just answering questions to solving hard, multi-step problems.

1. What are AI Agents?

AI agents can reason and carry out tasks on their own to reach a goal. Think of them as AI that acts independently, unlike a simple chatbot that just waits for your next prompt. AI agents follow a cycle. First, they sense their environment. Then, they reason about what to do next to achieve their goal. After deciding, they take action. Finally, they observe the result of that action. This loop keeps repeating until the goal is reached.
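
To make the cycle concrete, here is a minimal sketch in Python. The `ThermostatAgent` class and its room-temperature goal are made up for illustration. In a real agent, the `think` step would usually be a call to a reasoning model rather than a hand-written rule.

```python
from dataclasses import dataclass, field


@dataclass
class ThermostatAgent:
    """Toy agent (hypothetical) whose goal is a target room temperature."""
    target: float
    history: list = field(default_factory=list)

    def sense(self, environment: dict) -> float:
        # Step 1: read the current state of the environment.
        return environment["temperature"]

    def think(self, temperature: float) -> str:
        # Step 2: decide which action moves us closer to the goal.
        if temperature < self.target:
            return "heat"
        if temperature > self.target:
            return "cool"
        return "done"

    def act(self, action: str, environment: dict) -> None:
        # Step 3: change the environment.
        if action == "heat":
            environment["temperature"] += 1.0
        elif action == "cool":
            environment["temperature"] -= 1.0

    def observe(self, action: str, environment: dict) -> None:
        # Step 4: record what happened as a result of the action.
        self.history.append((action, environment["temperature"]))

    def run(self, environment: dict, max_steps: int = 20) -> list:
        # Repeat sense -> think -> act -> observe until the goal is met.
        for _ in range(max_steps):
            action = self.think(self.sense(environment))
            if action == "done":
                break
            self.act(action, environment)
            self.observe(action, environment)
        return self.history


room = {"temperature": 18.0}
agent = ThermostatAgent(target=21.0)
steps = agent.run(room)
print(steps)  # [('heat', 19.0), ('heat', 20.0), ('heat', 21.0)]
```

The `max_steps` cap is a design choice for the sketch: it keeps the loop from running forever if the goal can’t be reached.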

These agents can do many different jobs. An AI agent could act as your travel planner, booking your next trip. It might also work as a data analyst, finding trends in business reports. Or, it could even be a DevOps engineer, checking system logs for problems and fixing them. AI agents are often built using a special type of large language model called a large reasoning model.

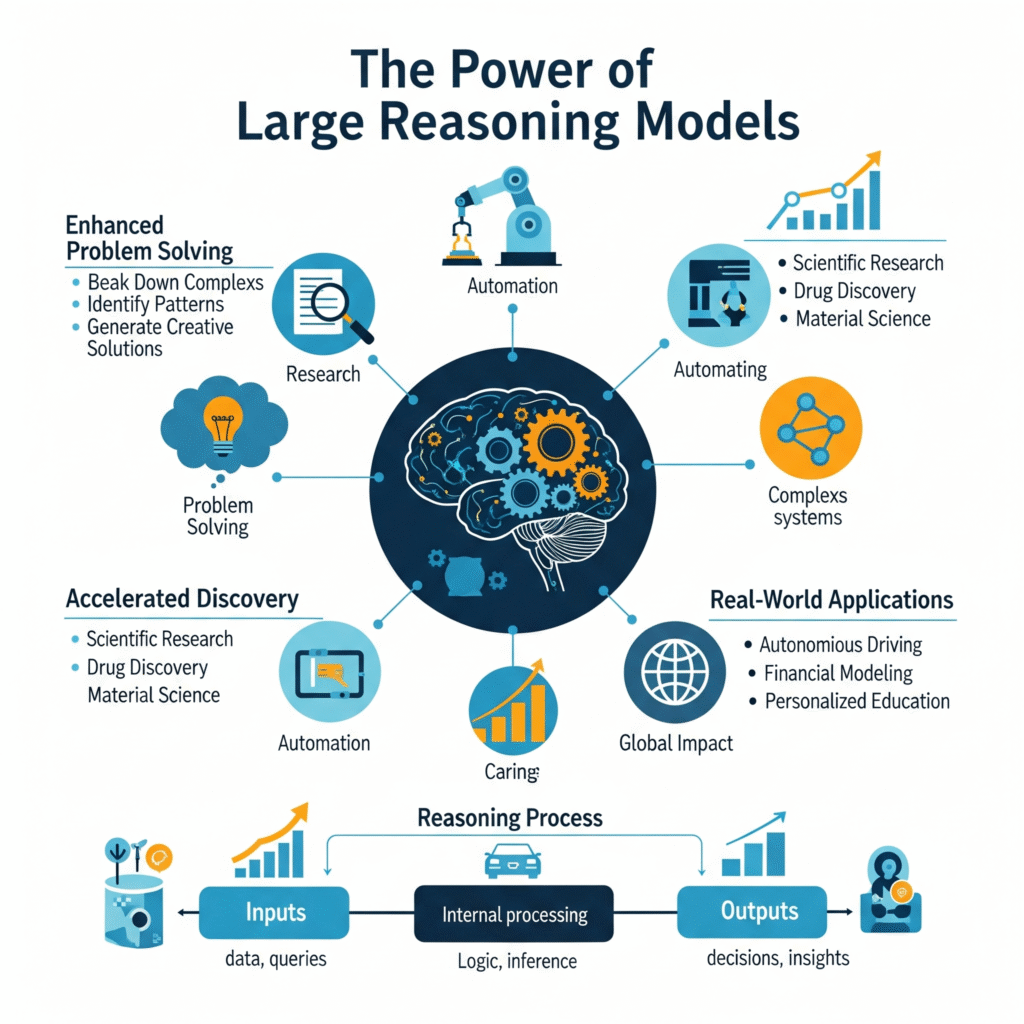

2. The Power of Large Reasoning Models

Large reasoning models are specialized large language models (LLMs). They are fine-tuned to solve problems step by step. Regular LLMs start producing an answer right away. Reasoning models, however, are trained to work through a problem before they respond. This is just what agents need to plan tasks that have many steps.

These models learn by working on problems whose answers can be verified as correct, such as math problems or computer code that can be checked automatically. Through a process called reinforcement learning, the model gets better at generating sequences of reasoning steps that lead to the right final answer. So, when you see a chatbot pause before answering and say “thinking,” that’s a reasoning model at work. It’s building a chain of thought internally to break down the problem before it gives you an answer.
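
Here is a rough sketch of the “checkable answer” idea in Python. It only shows how a reasoning trace might be scored against a known answer. The `Answer:` marker and both function names are assumptions made for this example, and the actual training step that uses the reward to update the model is not shown.

```python
def extract_final_answer(trace: str) -> str:
    """Return the text after the last 'Answer:' marker, or '' if it is missing.

    The 'Answer:' convention is an assumption made for this sketch.
    """
    marker = "Answer:"
    if marker not in trace:
        return ""
    return trace.rsplit(marker, 1)[1].strip()


def reward(trace: str, correct_answer: str) -> float:
    # 1.0 when the final answer matches the known-correct one, else 0.0.
    return 1.0 if extract_final_answer(trace) == correct_answer else 0.0


# Three made-up reasoning traces for the problem "17 * 3 + 4".
traces = [
    "17 * 3 = 51, then 51 + 4 = 55. Answer: 55",
    "17 * 3 = 41, then 41 + 4 = 45. Answer: 45",
    "I am not sure.",
]
rewards = [reward(t, "55") for t in traces]
print(rewards)  # [1.0, 0.0, 0.0]
```

Only the first trace earns a reward, so training would nudge the model toward reasoning that looks like it.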

3. Storing and Retrieving Knowledge: Vector Databases and RAG

For AI to be truly smart, it needs to handle lots of information well. This part looks at how vector databases and Retrieval Augmented Generation (RAG) let AI understand context and find the right data. This makes AI much more useful.

The Mechanics of Vector Databases

In a vector database, we don’t store data like text or pictures as raw files. Instead, we run the data through an embedding model. This model turns each item, such as an image, into a vector. A vector is basically a long list of numbers, and that list captures the meaning of the data.

The cool part is that we can search these databases using math. We look for vectors that are “close” to each other, which helps us find content with similar meaning. Imagine you start with a photo of a mountain. The embedding model turns this picture into a vector with many dimensions. Then, we can search for things that are similar to that mountain picture by finding the closest vectors in the database. If the embedding model maps different kinds of media into the same vector space, the matches could be text articles or even music files.
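
Here is a small Python sketch of what “close” can mean. It uses cosine similarity, one common way to compare vectors. The three-number vectors and file names are invented for the example. Real embeddings come from a trained model and have far more dimensions, and real vector databases use indexes instead of comparing against every item.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    # Near 1.0 means the vectors point the same way; near 0.0 means unrelated.
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest(query: list[float], store: dict[str, list[float]], k: int = 2) -> list[str]:
    # Score every stored vector against the query and keep the k best names.
    scored = [(cosine_similarity(query, vec), name) for name, vec in store.items()]
    scored.sort(reverse=True)
    return [name for _, name in scored[:k]]


# Hand-made stand-ins for embeddings (hypothetical values).
store = {
    "alps_hiking_article.txt": [0.9, 0.1, 0.0],
    "mountain_lake_photo.jpg": [0.8, 0.3, 0.1],
    "tax_form_guide.pdf": [0.0, 0.1, 0.9],
}
mountain_photo = [0.85, 0.2, 0.05]
matches = nearest(mountain_photo, store)
print(matches)
```

The two mountain-related items score close to 1.0 against the query, while the tax guide scores close to 0, so it is left out of the results.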

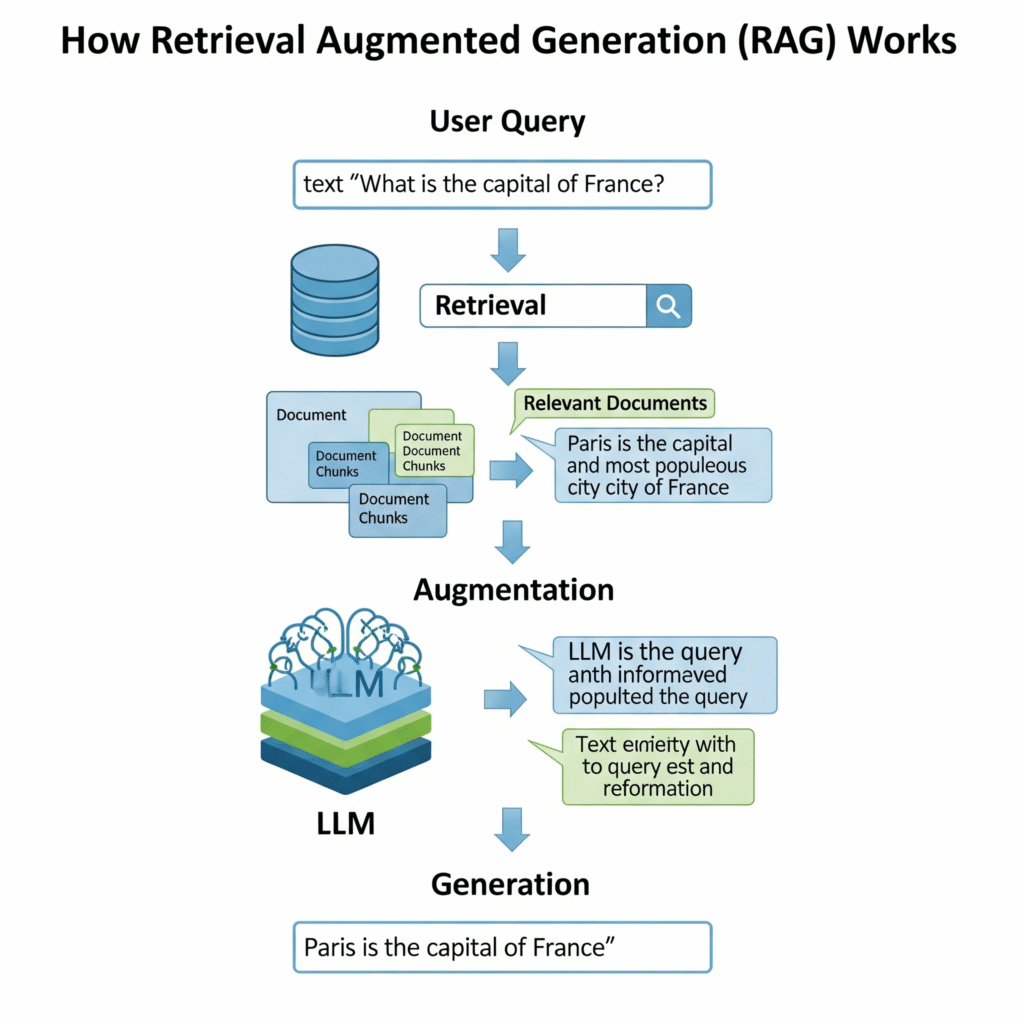

How Retrieval Augmented Generation (RAG) Works

RAG uses these vector databases to enrich the prompts we send to an LLM. A RAG system starts with a prompt from you. It turns that prompt into a vector using an embedding model. Then, it searches the vector database for similar information.

The database sends back the related information. This information is then added to your original prompt. So, if you ask a question about company policy, the RAG system will find the right part of the employee handbook. It will put that part into the prompt before sending it to the LLM. This helps the LLM give you a more accurate and informed answer.
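
The flow described above can be sketched in a few lines of Python. Everything here is a simplified stand-in: `embed` just counts words instead of calling a real embedding model, the “database” is a plain list, and the handbook sentences are made up. The final prompt would then be sent to an LLM, which is not shown.

```python
import math
import re


def embed(text: str) -> dict[str, int]:
    # Toy stand-in for an embedding model: a bag of lowercase word counts.
    counts: dict[str, int] = {}
    for word in re.findall(r"[a-z]+", text.lower()):
        counts[word] = counts.get(word, 0) + 1
    return counts


def similarity(a: dict[str, int], b: dict[str, int]) -> float:
    # Cosine similarity between two sparse word-count vectors.
    dot = sum(count * b.get(word, 0) for word, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, documents: list[str], k: int = 1) -> list[str]:
    # Rank stored passages by similarity to the question and keep the top k.
    query = embed(question)
    ranked = sorted(documents, key=lambda d: similarity(query, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, documents: list[str]) -> str:
    # Put the retrieved passage ahead of the original question.
    context = "\n".join(retrieve(question, documents))
    return f"Context:\n{context}\n\nQuestion: {question}"


# Made-up handbook passages.
handbook = [
    "Employees accrue 20 days of paid vacation per year.",
    "Expense reports must be submitted within 30 days.",
    "The office is closed on public holidays.",
]
question = "How many vacation days do employees get?"
prompt = build_prompt(question, handbook)
print(prompt)
```

The vacation passage shares the most words with the question, so it is the one that ends up in the prompt.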

4. Enabling Advanced Interactions: MCP and Mixture of Experts

As AI connects more with outside systems and models get bigger, new ways to manage these connections are needed. We’ll look at Model Context Protocol (MCP) for talking to systems in a standard way. We’ll also cover Mixture of Experts (MoE) for making models work fast and efficiently.

Standardizing AI-System Interaction with MCP

Model Context Protocol, or MCP, is a new standard. It helps LLMs talk to outside data sources and tools. For LLMs to be really helpful, they need to connect to things like databases, code repositories, or even email servers. MCP standardizes how those connections are made.

This means developers don’t have to build a custom integration for each new tool. MCP gives AI a standard path to access your systems. An MCP server acts like a translator. It tells the AI which tools are available and how to call them. This makes AI integration much simpler and more reliable.
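
To give a feel for what “standard” means here, the sketch below mimics the request and response shapes MCP uses for tools. MCP messages follow JSON-RPC 2.0, with methods such as `tools/list` and `tools/call`. This is a heavily simplified toy, not the official SDK: it skips the initialization handshake, transports, and most fields, and the `get_weather` tool is hypothetical.

```python
import json

# Hypothetical tool registry; the tool name and schema are invented for this sketch.
TOOLS = {
    "get_weather": {
        "description": "Return a canned weather report for a city.",
        "inputSchema": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
        "handler": lambda args: f"Sunny in {args['city']}",
    }
}


def handle_request(raw: str) -> str:
    """Answer one JSON-RPC 2.0 request in a simplified MCP-like style."""
    request = json.loads(raw)
    method = request["method"]
    if method == "tools/list":
        # Tell the client which tools exist and what input each one expects.
        result = {
            "tools": [
                {"name": name, "description": tool["description"], "inputSchema": tool["inputSchema"]}
                for name, tool in TOOLS.items()
            ]
        }
    elif method == "tools/call":
        # Run the named tool with the supplied arguments.
        params = request["params"]
        text = TOOLS[params["name"]]["handler"](params["arguments"])
        result = {"content": [{"type": "text", "text": text}]}
    else:
        # -32601 is the standard JSON-RPC "Method not found" error code.
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": request["id"], "error": error})
    return json.dumps({"jsonrpc": "2.0", "id": request["id"], "result": result})


list_reply = json.loads(handle_request(json.dumps(
    {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}
)))
call_reply = json.loads(handle_request(json.dumps(
    {"jsonrpc": "2.0", "id": 2, "method": "tools/call",
     "params": {"name": "get_weather", "arguments": {"city": "Oslo"}}}
)))
print(list_reply["result"]["tools"][0]["name"])    # get_weather
print(call_reply["result"]["content"][0]["text"])  # Sunny in Oslo
```

Because every tool is listed and called the same way, the client side doesn’t need tool-specific code. Adding a second tool would only mean adding another entry to the registry.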

5. Efficiency Through Mixture of Experts (MoE)

The idea of Mixture of Experts (MoE) has been around since 1991. But it’s more important now than ever. MoE splits a large language model into many smaller “experts.” These experts are specialized neural subnetworks, each good at specific things.

A routing mechanism then decides which experts are needed for a given input. Only those experts are activated. After the experts do their work, their outputs are merged mathematically, typically as a weighted combination, into one final result. It’s a very efficient way to make models bigger and smarter without a matching increase in compute cost. Models using MoE can have billions of total parameters, but only activate a fraction of them for any given token.
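
Here is a toy Python sketch of that routing idea, using top-k selection with softmax weights, which is one common approach. The four “experts” are simple math functions and the router scores are passed in by hand. Both are assumptions for illustration. In a real model, the experts are neural subnetworks and the scores come from a learned routing layer.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    # Turn raw scores into positive weights that sum to 1.
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Toy experts (hypothetical): each is just a function of one number.
EXPERTS = [
    lambda x: x + 1.0,
    lambda x: x * 2.0,
    lambda x: x * x,
    lambda x: -x,
]


def moe_forward(x: float, router_scores: list[float], k: int = 2):
    # Pick the k experts with the highest router scores.
    order = sorted(range(len(router_scores)), key=lambda i: router_scores[i], reverse=True)
    chosen = order[:k]
    # Weight only the chosen experts; the others are never run.
    weights = softmax([router_scores[i] for i in chosen])
    output = sum(w * EXPERTS[i](x) for w, i in zip(weights, chosen))
    return output, chosen


output, used = moe_forward(3.0, router_scores=[0.1, 2.0, 2.0, -1.0], k=2)
print(used)    # [1, 2]
print(output)  # 7.5
```

Experts 1 and 2 tie for the highest score, so each gets a weight of 0.5, and the result is 0.5 × 6 + 0.5 × 9 = 7.5. The other two experts are skipped entirely, which is where the savings come from.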

6. The Horizon of AI: From AGI to ASI

While AI is changing things today, the big goals of AI research are about reaching human-level intelligence and even going beyond it. This part briefly mentions the ideas of Artificial General Intelligence (AGI) and Artificial Superintelligence (ASI).

7. The Quest for Artificial General Intelligence (AGI)

AGI is a type of AI that could do any thinking task a human can. It’s something researchers are working towards. Right now, AGI is still a theoretical idea. We don’t have it yet, but it’s a major goal for many AI labs.

The Theoretical Realm of Artificial Superintelligence (ASI)

ASI is a step beyond AGI. It’s an AI that would be much smarter than any human. ASI systems could potentially improve themselves over and over again. Imagine an AI that could rewrite its own code to become smarter, in a never-ending loop. This kind of AI could solve our biggest problems or create new ones we can’t even imagine. It’s smart to keep ASI in mind as AI continues to develop.

Conclusion: Staying Ahead in the AI Revolution

The world of AI is always changing. New terms and ideas pop up quickly. Knowing about agentic AI, reasoning models, vector databases, RAG, MCP, and MoE gives you a good understanding of what AI can do today. While AGI and ASI are still just ideas, they show where AI might be headed in the future. By learning these key terms, you’ll be better prepared to understand and use the amazing power of artificial intelligence. What AI term do you think is important that I missed? Let me know in the comments.